Summary

A Human Activity Recognition (HAR) simulator that compares Federated Learning (FL), using on-device PyTorch Mobile inference, with server-side Deep Learning (DL) inference. It includes an Android app and a Flask API for comparing the two approaches on the UCI HAR dataset.

About Project

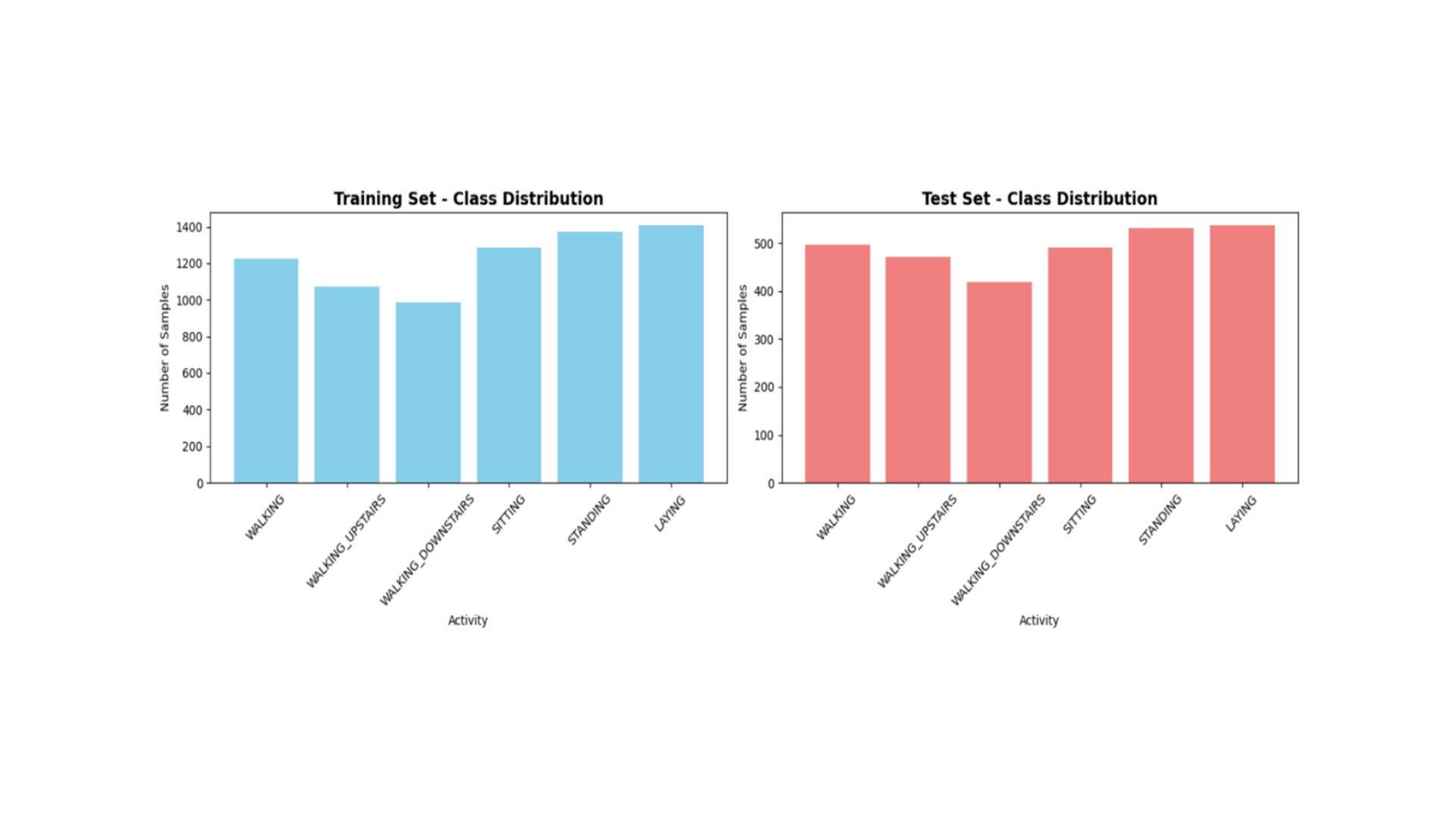

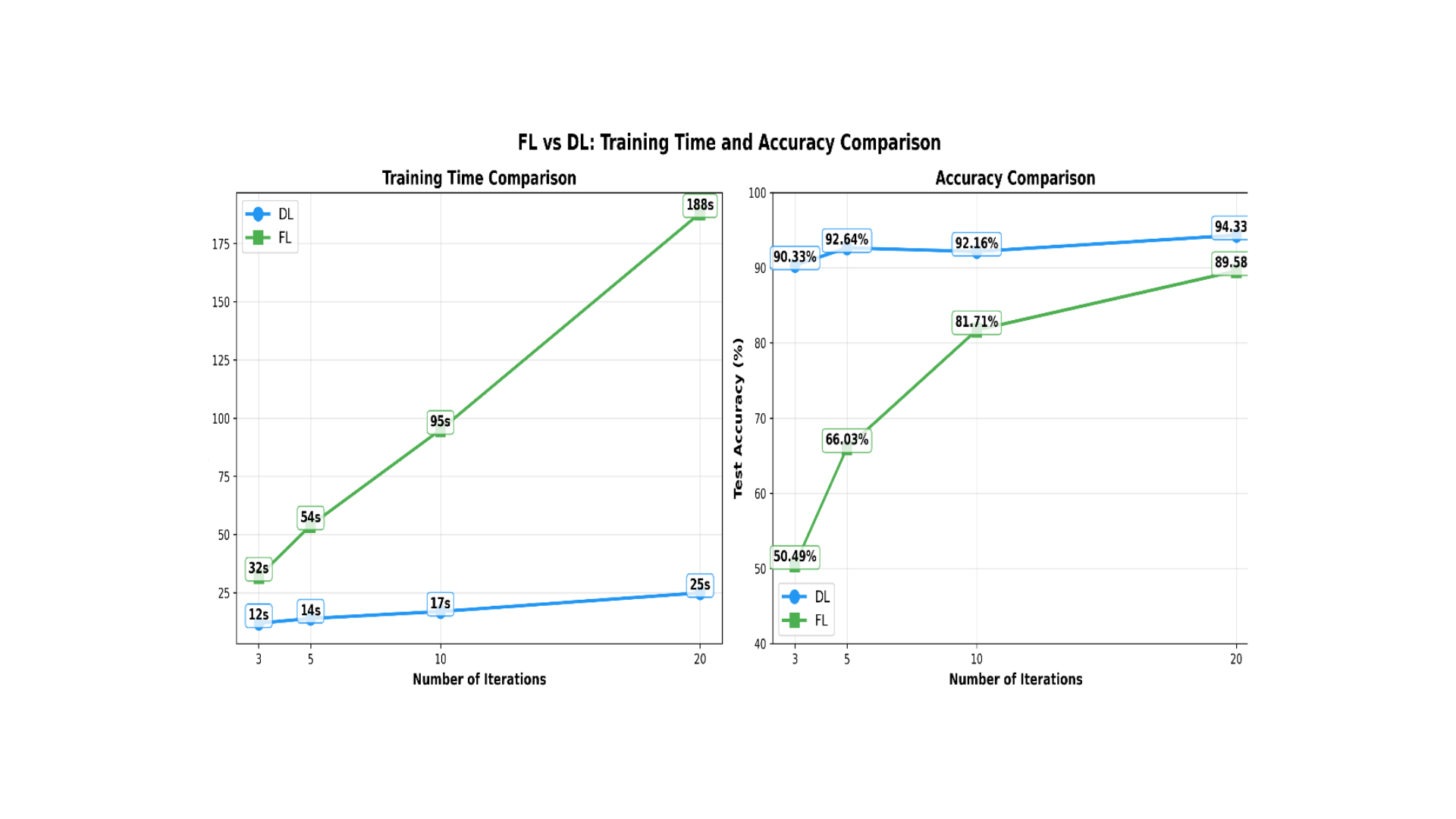

This thesis project delivers an end-to-end Human Activity Recognition (HAR) system built around two machine learning paradigms, centralized Deep Learning and privacy-preserving Federated Learning, and compares them on the UCI HAR Dataset (561 features, 6 activity classes, 10,299 samples).

The Model Training layer implements both architectures with TensorFlow/Keras and PyTorch in Jupyter notebooks, producing trained models benchmarked on accuracy, precision, recall, and F1-score.

The Flask REST API exposes 17 endpoints organized along service-oriented architecture (SOA) principles: route controllers (Blueprints), dedicated service components (DeepLearningService, FederatedService, GradientAggregator), and shared utilities for preprocessing, validation, and metrics tracking.

The Android Mobile App, built with Kotlin and Jetpack Compose (MVVM), collects real-time inertial sensor data, performs on-device FL inference via PyTorch Mobile, and communicates with the API through Retrofit. Users can trigger predictions, submit feedback to update the federated model, and review their classification history.
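The description names a GradientAggregator component but does not say how it combines client updates. As a rough illustration only, the sketch below assumes a FedAvg-style weighted mean, where each client's update is weighted by the number of samples it was computed on. The function name `aggregate_updates`, the `Update` type, and the flat-list parameter layout are hypothetical and are not taken from the project.

```python
from typing import Dict, List, Tuple

# Hypothetical layout: parameter name -> flat list of values.
Update = Dict[str, List[float]]


def aggregate_updates(client_updates: List[Tuple[Update, int]]) -> Update:
    """Return the sample-weighted mean of client updates (FedAvg-style).

    Each entry is (update, num_samples). This is an assumed scheme, not
    the project's confirmed aggregation logic.
    """
    if not client_updates:
        raise ValueError("no client updates to aggregate")

    total = sum(n for _, n in client_updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")

    reference = client_updates[0][0]
    aggregated: Update = {}
    for name, ref_values in reference.items():
        acc = [0.0] * len(ref_values)
        for update, n in client_updates:
            values = update[name]
            if len(values) != len(ref_values):
                raise ValueError(f"shape mismatch for parameter {name!r}")
            weight = n / total  # clients with more samples count more
            for i, v in enumerate(values):
                acc[i] += v * weight
        aggregated[name] = acc
    return aggregated


if __name__ == "__main__":
    client_a = ({"w": [1.0, 2.0]}, 1)  # update from 1 sample
    client_b = ({"w": [3.0, 6.0]}, 3)  # update from 3 samples
    print(aggregate_updates([client_a, client_b]))
```

In this toy run the second client contributes three quarters of the result because it reports three of the four samples. A real aggregator would more likely operate on tensors than on Python lists, and might add clipping or validation before averaging.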

Created Date

December 13, 2025